How to Implement Enterprise AI Without Disruption

by Ali Rind, Last updated: May 14, 2026

Most enterprise AI implementation guides on the web are written for the wrong audience. They assume cloud-by-default, generic data, and a compliance review that takes a week. None of that holds in healthcare, law enforcement, government, legal, or financial services. In those environments, the implementation question is not "how fast can we ship" but "how do we ship without breaking what's already working."

This roadmap is for that question. It covers the deployment model decisions you have to make before kickoff, the phased framework that gets you from kickoff to production, the compliance integration that runs in parallel, and the operational disruption you can avoid by sequencing the rollout correctly.

It's written for CIOs, IT directors, and compliance leaders who are scoping or about to scope an enterprise AI implementation.

Key takeaways

- The biggest implementation decision is not which AI features to use. It's which deployment model fits your compliance posture.

- Four deployment models cover most regulated and enterprise environments: SaaS, private cloud, government cloud, and on-premises. The right choice depends on data residency, regulatory framework, and existing infrastructure.

- A phased rollout of one workload on one workflow ships in 8 to 10 weeks on SaaS, 10 to 12 weeks on private cloud, and 12 to 16 weeks on government cloud or on-premises. Multi-workflow rollouts compress on the same foundation once identity and storage are in place.

- Compliance review timelines are usually longer than technical build timelines in regulated industries. Plan the legal track in parallel with the technical track from day one.

- Skipping the pilot and skipping change management are two of the most common ways production deployments stall. Both phases exist because neither one is enough on its own.

Why generic AI implementation frameworks fall short in regulated environments

The standard enterprise AI implementation roadmap looks the same across every vendor blog: vision, readiness, pilot, scale, monitor. The framework is fine. It just doesn't survive contact with a CJIS auditor, a HIPAA privacy officer, or a procurement team that requires FedRAMP authorization before any data leaves the building.

Three constraints reshape implementation in regulated industries.

Data cannot leave defined boundaries

A police department running a CJIS-compliant workflow cannot send body-cam footage to a public AI service. Maintaining chain of custody on digital evidence requires the AI processing layer to sit inside the same controlled boundary as the evidence storage. A hospital cannot expose PHI to a model that trains on inputs. A federal agency cannot store sensitive data outside FedRAMP-authorized infrastructure. This rules out the deployment model that most generic AI guides assume.

Compliance review is a parallel track, not a checkbox

Legal, privacy, and compliance review on a regulated AI deployment can run 4 to 12 weeks. If you start that track after the technical build is done, those weeks stack on top of the build instead of overlapping with it, which can push your go-live date out by as much as three months.
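The arithmetic behind this is simple enough to sketch. This is a minimal model under stated assumptions: a compliance review started on day one can fully overlap the build, and go-live waits for whichever track finishes last. The function name is hypothetical, and the week counts in the example are illustrative picks from the ranges in this guide, not benchmarks.

```python
def go_live_weeks(build_weeks: int, review_weeks: int, parallel: bool) -> int:
    """Weeks from kickoff to go-live under a simplified two-track model."""
    if parallel:
        # Both tracks start in week one; go-live waits for the slower track.
        return max(build_weeks, review_weeks)
    # Serial: the compliance review only starts once the build is finished.
    return build_weeks + review_weeks


# Illustrative inputs: a 10-week build and a 12-week compliance review.
print("serial:  ", go_live_weeks(10, 12, parallel=False), "weeks")
print("parallel:", go_live_weeks(10, 12, parallel=True), "weeks")
```

In the serial case every review week adds directly to the timeline. In the parallel case the review only delays go-live if it outlasts the build.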

Operational disruption is unacceptable in mission-critical workflows

A 911 dispatch center cannot pause to switch evidence systems. A hospital cannot stop processing claims while you migrate to a new document processing pipeline. Implementation has to happen alongside live operations, not in place of them.

The phased framework below is built around these three realities. It works for non-regulated enterprises too; it just has slack in places where regulated environments don't.

The deployment model decision (before anything else)

Before you talk about phases, you have to settle on where the platform actually runs. Four options cover most enterprise AI deployments:

SaaS (multi-tenant or dedicated)

Hosted on the vendor's infrastructure. Fastest to deploy, suitable for most enterprise customers without strict data-residency requirements. Vendors should support SOC 2 and GDPR compliance requirements through their deployment architecture, encryption, and audit controls, and hold ISO 27001 (or equivalent) certification.

Private cloud

The customer's own cloud tenant (Azure, AWS, or other). Data stays inside the customer's cloud boundary. Suitable for organizations with cloud-first IT but strict data-isolation requirements.

Government cloud

FedRAMP High and CJIS-aligned environments such as Azure Government or AWS GovCloud. The default for federal civilian agencies, defense contractors, state law enforcement, and any organization handling criminal justice information.

On-premises (including air-gapped)

Full stack runs in the customer's data center with no external API calls. Required for classified environments, some defense workloads, and organizations whose policy explicitly forbids cloud processing for certain data classes.

The decision is not made by IT alone. Compliance, legal, and security all have veto power. The implementation team that names the right decision-makers in week one is the team that doesn't have to redo the deployment model decision in week six.

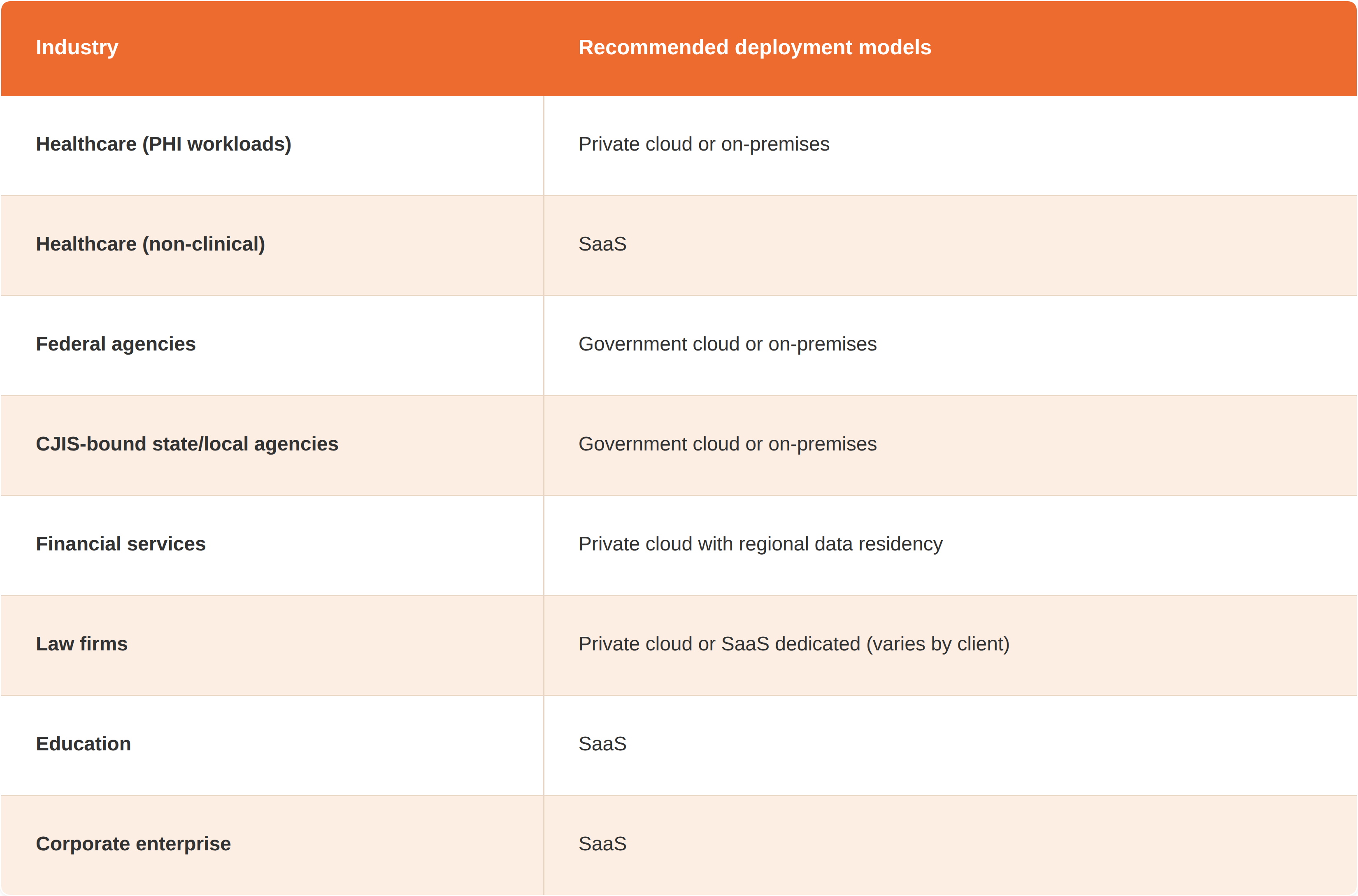

A quick reference for which deployment model fits which industry:
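The industry patterns described in this guide can be encoded as a first-pass screen that shortlists deployment models before the compliance, legal, and security owners weigh in. This is a hypothetical heuristic, not a substitute for their sign-off; the `DataProfile` fields and the ordering of checks are assumptions chosen so that the most restrictive constraint wins.

```python
from dataclasses import dataclass


@dataclass
class DataProfile:
    """Hypothetical summary of the constraints that drive the decision."""
    classified_or_air_gapped: bool = False
    cloud_forbidden_by_policy: bool = False
    cjis_or_fedramp_required: bool = False
    handles_phi: bool = False
    strict_data_residency: bool = False


def candidate_models(p: DataProfile) -> list[str]:
    """Shortlist of deployment models, checked from most to least restrictive."""
    if p.classified_or_air_gapped or p.cloud_forbidden_by_policy:
        return ["on-premises"]
    if p.cjis_or_fedramp_required:
        return ["government cloud", "on-premises"]
    if p.handles_phi:
        return ["private cloud", "on-premises"]
    if p.strict_data_residency:
        return ["private cloud"]
    # No residency or isolation constraint: SaaS is the fastest path.
    return ["SaaS"]


print(candidate_models(DataProfile(cjis_or_fedramp_required=True)))
```

Checking the most restrictive constraint first means an organization that both handles PHI and forbids cloud processing lands on on-premises rather than private cloud.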

Before you start: the pre-implementation checklist

This is the work that happens before kickoff. Treat it as gating, not optional. The broader prep work (data governance, workforce AI literacy, executive buy-in) is covered separately in our organizational AI readiness guide. The implementation-specific version is shorter:

- Identity and SSO ready. Microsoft Entra ID, Okta, or another identity provider (IdP) needs to be available for integration. If SSO isn't in place, that's a separate project that runs first.

- Data sources mapped. Where is the source video, document, audio, or evidence today? Which systems will the AI platform need to read from or write to?

- Compliance owner named. One person who can speak for legal, privacy, and security on this deployment. Not three people. One.

- Pilot workflow scoped. One workflow, one team, one measurable before-and-after metric. Not five workflows. Our enterprise AI use cases guide covers the 15 workflow patterns most regulated organizations pilot first.

- Success metric defined. Time saved per FOIA response, reduction in evidence review hours, average time to clinical training completion. Pick one. Generic "improve productivity" doesn't qualify.

Organizations that skip this checklist usually redo most of it in weeks 2 to 4 of the implementation, paying for the same work twice.
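Because the checklist is gating, it can be tracked as a simple pass/fail record that lists whatever still blocks kickoff. A minimal sketch; the class and field names are hypothetical and simply mirror the five items above.

```python
from dataclasses import dataclass, fields


@dataclass
class ReadinessChecklist:
    """The five gating items from the pre-implementation checklist."""
    identity_and_sso_ready: bool = False
    data_sources_mapped: bool = False
    compliance_owner_named: bool = False
    pilot_workflow_scoped: bool = False
    success_metric_defined: bool = False


def blockers(checklist: ReadinessChecklist) -> list[str]:
    """Names of unchecked items; kickoff should wait until this list is empty."""
    return [f.name for f in fields(checklist) if not getattr(checklist, f.name)]


status = ReadinessChecklist(identity_and_sso_ready=True, compliance_owner_named=True)
print(blockers(status))
```

Here the output lists the three items still open (data sources, pilot scope, success metric), in checklist order.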

The five-phase implementation framework

A typical single-workload deployment on a defined workflow runs 8 to 10 weeks on SaaS, 10 to 12 weeks on private cloud, and 12 to 16 weeks on government cloud or on-premises. The phases below are sequential, but compliance review runs in parallel from phase one.

Phase 1: Discovery and scoping

Workshop with the deployment team to confirm deployment model, identity strategy, pilot workflow, and success metrics. The compliance owner joins this phase, not a later one. Many failed deployments trace back to a phase-one shortcut.

Deliverables: signed-off deployment architecture, identity integration plan, pilot scope document, compliance review timeline.

Phase 2: Infrastructure and identity

Stand up the environment (SaaS provisioning, private cloud setup, government cloud configuration, or on-premises install). Wire in SSO, configure encryption, set retention policies, and connect to source storage.

For regulated deployments, the compliance review track is already running here. CJIS evidence-handling reviews, HIPAA BAA execution, and FedRAMP package alignment all happen in parallel with the technical build.

Deliverables: working environment with SSO, encryption verified, audit logging enabled, integration points tested.

Phase 3: Pilot

One product, one workflow, one user team. The pilot is the moment to find out whether the assumptions in phase one survive contact with real data and real users.

Right-sized pilots share a few traits: one user team rather than the whole organization, a defined data set rather than the full corpus, and a measurable success metric the team can defend in a budget review. A few examples that work:

- A RAG-based AI agent grounded on a defined document set for one user group, such as a legal team's contract library or an IT helpdesk's policy docs

- Migration of one video content library (training, town halls, or compliance content) with AI transcription and search enabled for one department

- Body-cam evidence ingestion from one precinct with chain-of-custody logging and AI transcription running end to end. This is a common entry point for small departments working through FOIA backlogs before they scale to the wider agency.

- One redaction workflow, such as body-cam FOIA response or HIPAA disclosure prep, with human reviewers in the loop

The pilot ends with a measurable before-and-after on the success metric defined in phase one.
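For a time-based metric, the before-and-after can be reported as a percent reduction in the median. A minimal sketch; the median is a choice made here (a few unusually long cases can skew a mean), and the sample hours are hypothetical, not results from any deployment.

```python
from statistics import median


def percent_reduction(before: list[float], after: list[float]) -> float:
    """Percent reduction in the median of a time-based success metric."""
    baseline = median(before)
    if baseline == 0:
        raise ValueError("baseline median must be non-zero")
    return 100.0 * (baseline - median(after)) / baseline


# Hypothetical hours per FOIA video response, sampled before and during the pilot.
before_hours = [6.0, 8.5, 7.0, 9.0, 7.5]
after_hours = [2.0, 2.5, 1.5, 3.0, 2.0]
print(f"{percent_reduction(before_hours, after_hours):.1f}% reduction in median hours")
```

With these sample values the median drops from 7.5 to 2.0 hours, roughly a 73% reduction, the kind of single number that holds up in a budget review.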

Phase 4: Production deployment

If the pilot succeeded, production deployment expands the same workflow to the full user base. The platform configuration is already proven, so this phase is mostly about scaling user access, configuring retention and archival, finalizing audit reporting for compliance, and training the broader team.

Production governance starts here: decide who can approve new use cases on the platform, who reviews AI outputs for quality, and who owns the operational metrics from this point on.

Phase 5: Scale across workflows

After the first production workflow is stable, additional workflows extend off the same foundation. A customer that pilots body-cam evidence intake often adds FOIA redaction next, then case-evidence search. Each new workflow takes weeks rather than months, because identity, storage, and compliance posture are already in place.

This is also where multi-product deployments pay off economically. The marginal cost of adding a second AI workload on the same platform is much lower than standing up a second vendor.

Implementation considerations by AI workload

Implementation differs across AI workload types. The general framework above applies, but each workload has its own setup considerations that change the pilot scope.

Conversational AI agents and RAG-based search

The longest configuration phase is data grounding. Retrieval-augmented generation (RAG) agents need a defined corpus (documents, transcripts, evidence, or media), correct access controls on that corpus, and a tested embedding pipeline before the agent goes live. Skipping the grounding step is one of the most common reasons early AI agent pilots return generic or wrong answers.
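The grounding and access-control steps can be sketched as retrieval over a defined corpus, with permissions enforced before ranking. This is a toy under loud assumptions: word-count cosine similarity stands in for a real embedding model, and `Doc`, `retrieve`, and the sample documents are hypothetical. The point is the order of operations, not the scoring.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Doc:
    doc_id: str
    text: str
    allowed_groups: frozenset[str]  # groups permitted to read this document


def vectorize(text: str) -> Counter:
    # Toy stand-in for an embedding model: lowercase word counts.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, corpus: list[Doc], user_groups: set[str], k: int = 2) -> list[str]:
    """Top-k documents the user may read, ranked by similarity to the query."""
    q = vectorize(query)
    # Apply access control before ranking, so restricted text never reaches the prompt.
    visible = [d for d in corpus if d.allowed_groups & user_groups]
    scored = sorted(((cosine(q, vectorize(d.text)), d.doc_id) for d in visible),
                    key=lambda pair: pair[0], reverse=True)
    # Drop zero-score hits: an empty result means "not in the corpus", not "guess".
    return [doc_id for score, doc_id in scored[:k] if score > 0]


corpus = [
    Doc("nda-template", "Mutual nondisclosure agreement, confidentiality term", frozenset({"legal"})),
    Doc("msa-indemnity", "Master services agreement indemnification clause", frozenset({"legal"})),
    Doc("vpn-policy", "VPN access policy for remote staff", frozenset({"it", "legal"})),
]
print(retrieve("indemnification clause in the services agreement", corpus, {"legal"}))
```

A user in the `it` group asking the same question gets an empty result rather than a legal document, which is the behavior a tightly scoped agent should fall back on.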

Scope agents tightly. A legal team's clause-library agent works; a "legal AI assistant for everything" doesn't. Narrow scopes ship; broad scopes stall.

Intelligent document processing

The work is in sample documents and routing rules. The model needs sample documents from each form type the team wants to extract, plus the downstream routing rules that send the structured output into the correct system (CRM, claims platform, case management, ERP).

The pilot typically runs on one form type before expanding. Insurance claims intake, healthcare billing, KYC verification, and contract data extraction all follow this same one-form-then-scale pattern.
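The downstream routing rules can start as an explicit lookup table, with anything outside the pilot's enabled form types sent to a person. A minimal sketch; the form-type keys, system names, and the `manual_review` fallback are hypothetical labels, not any product's configuration.

```python
# Hypothetical routing table: extracted form type -> downstream system.
ROUTES: dict[str, str] = {
    "insurance_claim": "claims_platform",
    "healthcare_bill": "claims_platform",
    "kyc_form": "crm",
    "contract": "erp",
}


def route(extracted: dict, enabled_types: set[str]) -> str:
    """Pick the destination system for one extracted document."""
    form_type = extracted.get("form_type")
    # Form types not yet enabled in the pilot, or unmapped, go to a human queue.
    if form_type not in enabled_types or form_type not in ROUTES:
        return "manual_review"
    return ROUTES[form_type]


pilot_types = {"insurance_claim"}  # one form type first, then scale
print(route({"form_type": "insurance_claim", "amount": 1200}, pilot_types))
print(route({"form_type": "kyc_form"}, pilot_types))
```

Expanding past the pilot then means adding a form type to the enabled set, not rewriting the routing logic.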

Custom workflow automation

Workflow automation needs business-rule documentation before model chaining can be configured. A claims pipeline that extracts data, classifies risk, redacts PII, summarizes evidence, and routes to an adjuster has five model steps. Each one needs a documented rule the team can audit later.

Workflows that automate manual review steps need a human-in-the-loop checkpoint until output quality is verified. Removing the human checkpoint is a phase-five decision, not a phase-three one.
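The claims example can be sketched as a chain of steps that logs each handoff alongside its documented rule and ends at a human checkpoint by default. A minimal sketch, assuming each step is a plain function over a record; the `Pipeline` class, step lambdas, thresholds, and queue names are all hypothetical stand-ins for real models and rules.

```python
from dataclasses import dataclass, field
from typing import Callable

Record = dict
Step = Callable[[Record], Record]


@dataclass
class Pipeline:
    # Each step: (name, documented business rule, function applying it).
    steps: list[tuple[str, str, Step]]
    # Keep True until output quality is verified; removing it is a phase-five decision.
    require_human_review: bool = True
    audit_log: list[dict] = field(default_factory=list)

    def run(self, record: Record) -> Record:
        for name, rule, step in self.steps:
            record = step(record)
            # Record every handoff with the rule that was applied, for later audit.
            self.audit_log.append({"step": name, "rule": rule})
        record["status"] = "awaiting_human_review" if self.require_human_review else "released"
        return record


claims = Pipeline(steps=[
    ("extract", "Read claimed amount from the form", lambda r: {**r, "amount": 1200}),
    ("classify_risk", "Amounts over 1,000 are high risk",
     lambda r: {**r, "risk": "high" if r["amount"] > 1000 else "low"}),
    ("redact_pii", "Mask claimant name before summarization",
     lambda r: {**r, "claimant": "[REDACTED]"}),
    ("summarize", "One-line summary of risk and amount",
     lambda r: {**r, "summary": f"{r['risk']}-risk claim for {r['amount']}"}),
    ("route", "High risk goes to a senior adjuster",
     lambda r: {**r, "queue": "senior_adjuster" if r["risk"] == "high" else "adjuster"}),
])
result = claims.run({"claimant": "Jane Doe"})
print(result["summary"], result["queue"], result["status"])
```

Keeping the rule text next to each step means the audit log answers "which rule produced this output" without reverse-engineering the chain.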

Video knowledge management

The implementation work is usually ingestion and identity. Ingesting an existing video library (Zoom recordings, Microsoft Stream archives, old LMS content, ad-hoc cloud storage) into an AI-powered video platform needs format normalization and metadata mapping. AI transcription and semantic search then run automatically across the ingested library, replacing the manual tagging that most organizations were doing before.
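Metadata mapping during ingestion amounts to translating each source's fields onto one common schema while keeping anything unmapped. A minimal sketch; the per-source field names below are hypothetical placeholders, not the actual export schemas of Zoom, Microsoft Stream, or any LMS, so they would need to be checked against real exports.

```python
# Hypothetical per-source field names mapped onto one common schema.
FIELD_MAPS: dict[str, dict[str, str]] = {
    "zoom": {"topic": "title", "start_time": "recorded_at", "host_email": "owner"},
    "stream": {"name": "title", "created": "recorded_at", "creator": "owner"},
    "lms": {"course_title": "title", "published_on": "recorded_at", "author": "owner"},
}


def normalize(source: str, raw: dict) -> dict:
    """Map one source record onto the common schema."""
    mapping = FIELD_MAPS[source]
    out = {common: raw[src] for src, common in mapping.items() if src in raw}
    out["source"] = source
    # Keep unmapped fields so nothing is silently dropped during migration.
    out["extra"] = {k: v for k, v in raw.items() if k not in mapping}
    return out


print(normalize("zoom", {"topic": "Q3 town hall", "start_time": "2025-09-01", "duration": 60}))
```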

LMS integration through SCORM 1.2/2004 or LTI 1.3 is configured during phase two if the customer's training workflow needs it, and eCDN setup is added for large-scale live streaming deployments. LMS standards support and eCDN delivery are often gated to higher pricing tiers, so verify availability against your selected plan during scoping.

Digital evidence management

Evidence management implementation is anchored on chain-of-custody and evidence ingestion. The team configures evidence intake from each source (body-cam upload, CCTV import, interview recording, witness statements), maps role-based access to case and unit structures, and sets retention policies aligned to the agency's records schedule.
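One common technique for making a chain-of-custody log tamper-evident is hash chaining: each event stores a SHA-256 digest of its own contents plus the previous event's digest. This is a minimal sketch of that general technique, not a description of any product's implementation; the function names and event fields are hypothetical. Note one limitation: deleting events from the end of the log still verifies, so the latest digest would also need to be stored somewhere separate.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first event


def _digest(entry: dict, prev_hash: str) -> str:
    payload = json.dumps(entry, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(log: list[dict], entry: dict) -> None:
    """Append a custody event, chaining it to the previous event's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"entry": entry, "prev": prev_hash, "hash": _digest(entry, prev_hash)})


def verify(log: list[dict]) -> bool:
    """Recompute every hash; editing, reordering, or deleting a mid-chain event fails."""
    prev_hash = GENESIS
    for record in log:
        if record["prev"] != prev_hash or record["hash"] != _digest(record["entry"], prev_hash):
            return False
        prev_hash = record["hash"]
    return True


custody_log: list[dict] = []
append(custody_log, {"evidence_id": "BWC-0142", "action": "ingested", "by": "officer.lee"})
append(custody_log, {"evidence_id": "BWC-0142", "action": "viewed", "by": "analyst.kim"})
print(verify(custody_log))
custody_log[0]["entry"]["by"] = "someone.else"  # simulate an after-the-fact edit
print(verify(custody_log))
```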

CJIS-aligned deployment is the default for law enforcement. Government cloud or on-premises are the typical options. The compliance review track runs parallel to the technical build because CJIS evidence-handling sign-off is needed before production go-live.

AI redaction at scale

Redaction implementation depends on the redaction workflow. Body-cam and surveillance video redaction needs an upload or ingest path, AI model configuration for the object types being redacted (faces, license plates, screens), and a human review interface for the redaction analyst.

Document redaction for HIPAA, PCI-DSS, or PII workflows uses the same human-in-the-loop pattern but with a different model configuration. For HIPAA, the Privacy Rule (including its de-identification standard) defines what has to be removed and what can stay, and the model configuration follows from there. Audio redaction for body-cam audio or recorded calls is configured similarly.
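The human-in-the-loop pattern is easiest to see with a simple detector that proposes redactions and reports what it found for a reviewer to confirm. In this sketch, regular expressions are swapped in for a trained model, and they cover only three identifier types, far short of the 18 identifiers in HIPAA's Safe Harbor list. The pattern set and function name are hypothetical.

```python
import re

# Illustrative patterns only; a production workflow would use trained models plus review.
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "PHONE": re.compile(r"\(?\b\d{3}\)?[-. ]?\d{3}[-. ]\d{4}\b"),
}


def redact(text: str) -> tuple[str, list[tuple[str, str]]]:
    """Replace matches with labels and return the findings for a human reviewer."""
    findings: list[tuple[str, str]] = []
    for label, pattern in PATTERNS.items():
        findings += [(label, m.group()) for m in pattern.finditer(text)]
        text = pattern.sub(f"[{label}]", text)
    return text, findings


redacted, found = redact(
    "Patient Jane Roe, SSN 123-45-6789, email jane.roe@example.org, phone (555) 123-4567."
)
print(redacted)
print(found)
```

The patient's name survives because no pattern covers names, which is exactly the kind of miss the human reviewer in the loop is there to catch.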

Throughput planning matters more for redaction than for the other workloads. A small agency redacting 5 to 10 videos per week has a different deployment than an attorney general's office redacting hundreds of videos per month for litigation production. The pilot should match the team's expected steady-state volume.
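Matching the pilot to steady-state volume starts with a rough estimate of reviewer hours. A back-of-the-envelope sketch: the 20-minute average video length, 1.5 review minutes per footage minute, 30 productive review hours per analyst, and 400 videos per month for the larger office are all illustrative assumptions, not benchmarks.

```python
import math


def weekly_review_hours(videos_per_week: float, avg_minutes: float, review_ratio: float) -> float:
    """Analyst hours per week spent checking AI-suggested redactions.

    review_ratio: assumed minutes of human review per minute of footage.
    """
    return videos_per_week * avg_minutes * review_ratio / 60


def analysts_needed(hours_per_week: float, productive_hours: float = 30.0) -> int:
    """Whole analysts required, assuming `productive_hours` of review time each per week."""
    return math.ceil(hours_per_week / productive_hours)


# Illustrative inputs: 20-minute videos, 1.5 review minutes per footage minute.
small_agency = weekly_review_hours(10, 20, 1.5)          # 10 videos per week
ag_office = weekly_review_hours(400 * 12 / 52, 20, 1.5)  # 400 videos per month
for label, hours in [("small agency", small_agency), ("AG office", ag_office)]:
    print(f"{label}: {hours:.1f} h/week, {analysts_needed(hours)} analyst(s)")
```

Under these assumptions the small agency needs about 5 review hours a week and the attorney general's office about 46, which is the difference between a part-time task and a dedicated team.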

Compliance integration at each phase

Regulated deployments need compliance touchpoints at every phase, not just at the end.

Phase 1 (Discovery)

Identify which regulatory frameworks apply. Confirm the deployment model meets each one. The compliance owner signs off on the architecture.

Phase 2 (Infrastructure)

Execute BAAs for HIPAA workloads, confirm CJIS deployment posture, validate FedRAMP authorization where required. Encryption, audit logging, and retention policies are configured to match the regulatory floor.

Phase 3 (Pilot)

Compliance owner reviews pilot scope and approves the pilot user group. Audit logs are validated mid-pilot to confirm the platform is producing the records required for inspection or subpoena.

Phase 4 (Production)

Compliance review of the production rollout. Final approval on access policies, retention, and incident response procedures.

Phase 5 (Scale)

Each new workflow goes through an abbreviated compliance review, since the underlying platform is already approved. New regulatory frameworks (a new state law, a new industry rule) trigger a fresh review.
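The rule for choosing review depth can be sketched as a set difference: if a new workflow brings in no framework the platform hasn't already been reviewed against, the review is abbreviated; otherwise the new frameworks trigger a full one. A minimal sketch; the function name and framework labels are hypothetical.

```python
def review_scope(approved_frameworks: set[str], workflow_frameworks: set[str]) -> str:
    """Pick the compliance review depth for a new workflow on an approved platform."""
    new = workflow_frameworks - approved_frameworks
    if new:
        # A framework the platform was never reviewed against needs a fresh review.
        return "full review: " + ", ".join(sorted(new))
    return "abbreviated review"


approved = {"CJIS"}
print(review_scope(approved, {"CJIS"}))            # e.g. adding FOIA redaction
print(review_scope(approved, {"CJIS", "HIPAA"}))   # e.g. a workflow touching PHI
```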

The NIST AI Risk Management Framework is the primary U.S. reference for governance practices in regulated deployments. The FBI CJIS Security Policy is the controlling document for law enforcement, and the HHS Office for Civil Rights guidance covers HIPAA. Organizations in the EU should reference the EU AI Act for the AI-specific overlay on existing GDPR obligations.

Common implementation pitfalls and how to avoid them

The research on why enterprise AI projects fail is consistent. Gartner predicts that through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data. The implementation patterns below show up across deployments that stall or extend past timeline. Each one is preventable.

Skipping the pilot

Going straight from infrastructure to production deployment usually fails. The pilot exists because real data and real users surface integration issues that the architecture review doesn't catch.

Running compliance review serially after the technical build

This stacks the review weeks on top of the build instead of overlapping them, which can add months to go-live. Compliance review should start in week one and run parallel to the technical track.

Over-scoping the first workflow

"Roll out AI across the agency" is not a pilot. "Cut FOIA video redaction time by 75% for the public records team by end of Q2" is a pilot. The second one is the kind that gets to a second budget cycle.

Underestimating identity integration

SSO setup looks simple on paper. It is usually the largest single technical task in phase two, especially when the customer is integrating with a legacy IdP or running federated identity across multiple agencies.

Treating change management as optional

The analysts whose workflows are being automated need to be part of the pilot, not surprised by it. Teams that involve their FOIA analysts, evidence custodians, and claims processors in the pilot phase have the smoothest production rollouts.

Choosing the wrong deployment model under time pressure

SaaS is faster to deploy. It is also the wrong choice for some regulated environments. A SaaS deployment that has to be migrated to government cloud six months later is one of the most expensive implementation patterns there is.

How VIDIZMO supports enterprise AI implementation

VIDIZMO's product portfolio covers the four AI workload categories most commonly deployed in regulated and enterprise environments, all on a shared platform with the same identity, storage, and compliance foundation.

- AI Intelligence Hub for conversational AI agents grounded in private enterprise data, intelligent document processing, custom workflow automation, and multimodal analytics across video, audio, documents, and images.

- EnterpriseTube for AI-powered video knowledge management with transcription, semantic search, chaptering, summarization, and compliance training analytics. EnterpriseTube includes a built-in eCDN for bandwidth-optimized streaming, and SCORM 1.2/2004 and LTI 1.3 integration is available on EnterpriseTube's Ultimate tier.

- Digital Evidence Management System for chain-of-custody logging, AI-assisted evidence transcription and summarization, multi-source correlation, and FOIA response automation. Includes CaseBot, an AI investigation assistant for natural-language evidence search.

- Redactor for AI redaction across body-cam video, surveillance footage, audio, and documents, with human-in-the-loop review for compliance workflows.

For a closer look at what to deploy and where each workload pays off, see our companion post on enterprise AI use cases.

People Also Ask

How long does an enterprise AI implementation take?

A single-workload deployment runs 8 to 10 weeks on SaaS, 10 to 12 weeks on private cloud, and 12 to 16 weeks on government cloud or on-premises. Multi-workload rollouts compress on shared infrastructure once the first is live. The technical build is usually faster than the compliance review track in regulated environments, which is why both should run in parallel from day one.

What are the most common enterprise AI implementation risks?

The most common implementation risks are data readiness (Gartner predicts that through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data), over-scoping the first workflow, running compliance review serially after the technical build, underestimating identity integration, and choosing the wrong deployment model under time pressure. Each one is preventable through phase-one discovery, and each one regularly extends timelines by months when that discovery is skipped.

Can enterprise AI be implemented without disrupting existing operations?

Yes, when the rollout is phased correctly. The pilot phase runs alongside existing workflows, with the production switchover happening only after the pilot proves the new workflow performs at least as well as the old one. This pattern works for evidence intake, FOIA response, claims processing, and clinical training without taking the existing workflow offline.

How do you choose the right deployment model for enterprise AI?

The decision is driven by regulatory framework and data residency, not by IT preference. CJIS-bound law enforcement and federal agencies typically run government cloud or on-premises. Healthcare organizations handling PHI usually go private cloud or on-premises. Financial services run private cloud with regional data residency configured. Education and corporate enterprise customers usually run SaaS. The deployment model decision is the most expensive one to get wrong, which is why it's the first decision in phase one.

About the Author

Ali Rind

Ali Rind is a Product Marketing Executive at VIDIZMO, where he focuses on digital evidence management, AI redaction, and enterprise video technology. He closely follows how law enforcement agencies, public safety organizations, and government bodies manage and act on video evidence, translating those insights into clear, practical content. Ali writes about VIDIZMO's Digital Evidence Management System, Redactor, and AI Intelligence Hub products, covering everything from compliance challenges to real-world deployment across federal, state, and commercial markets.
