AI and Client Data Privacy for Lawyers: A HIPAA Compliance Guide

by Ali Rind, Last updated: May 15, 2026

For small firm attorneys, AI tools promise real productivity gains: faster document review, automated drafting, quicker research. But the client data privacy risk that comes with those tools is not hypothetical. It is an active compliance exposure that many attorneys underestimate.

Before you upload a client's medical records to an AI summarization tool or run a deposition transcript through a chatbot, two legal frameworks require your attention: HIPAA and attorney-client privilege. Both have direct implications for what you can and cannot do with client data in AI systems. Neither is solved by a browser privacy setting.

This guide explains the risks, what the law actually requires, and how to use AI in your practice without compromising client confidentiality or your compliance obligations.

Why Attorneys Are Turning to AI for Document Workflows

Personal injury attorneys and small firm practitioners deal with enormous document volumes: multi-hundred-page medical records, insurance correspondence, deposition transcripts, police reports, and discovery files. AI tools can significantly reduce that workload.

Large language model (LLM) assistants can summarize hundreds of pages in minutes, draft demand letters from bullet-point notes, identify key facts across discovery documents, and transcribe recorded statements. The productivity case is legitimate. The data privacy risk is equally real, and far less discussed in most bar guidance.

The Privacy Risk: What Happens to Data You Upload to AI Tools

Most consumer and enterprise AI tools operate on cloud infrastructure. When you upload a document for summarization or analysis, that document leaves your environment. Depending on the vendor's data use policy:

- The data may be stored on the vendor's servers after the session ends

- The content may be used to improve or retrain the AI model

- Third-party subprocessors may have access to the data

- Data residency cannot always be guaranteed

This matters directly for law firms handling client files. Medical records, insurance documents, and recorded statements contain protected health information (PHI) (patient names, diagnosis codes, treatment histories, prescription records, and health plan identifiers) along with other personally identifiable information (PII) protected under multiple legal frameworks.

Many attorneys assume that using incognito mode or disabling chat history resolves the data exposure problem. It does not. Incognito mode affects only your browser's local history. Disabling chat history may prevent the AI from referencing prior sessions, but it does not govern how the vendor processes or stores data on its servers.

What HIPAA Actually Requires for Law Firms Using AI

Can lawyers use AI for medical records? Yes, but only when proper safeguards are in place.

HIPAA applies to covered entities (healthcare providers, insurers, clearinghouses) and their business associates. A personal injury law firm that receives PHI from a healthcare provider as part of a client matter may be operating as a business associate under HIPAA, depending on how the records were obtained and on whose behalf the firm is acting. Where that classification applies, it triggers specific obligations under the Security Rule.

Uploading identifiable PHI to a third-party AI tool without a Business Associate Agreement (BAA) in place is a HIPAA violation, regardless of which settings are enabled or how the upload is performed. For a detailed breakdown of what HIPAA requires and the penalties involved, see HIPAA Redaction: Protecting Patient Data Under the Privacy Rule.

HIPAA compliance for law firms using AI requires:

- A BAA with every vendor that receives or processes PHI on your behalf

- Confirmation that the vendor supports HIPAA-compliant deployment configurations

- Data minimization: transmitting only what is necessary for the intended use

- Documentation of how PHI is handled throughout the workflow

Most general-purpose AI tools, including widely used consumer chatbots, do not offer BAAs to individual users or small firms. Without a BAA, using those tools to process PHI creates direct HIPAA liability, regardless of the firm's size.

Attorney-Client Privilege Considerations with AI

Beyond HIPAA, attorney-client privilege adds a separate layer of risk. Privilege protects confidential communications between attorneys and clients from disclosure. One of the primary ways privilege is waived is by disclosing confidential information to a third party without authorization.

When you upload a client file to a third-party AI service, you are transmitting that information outside the attorney-client relationship. Whether that transmission constitutes a waiver of privilege is an evolving legal question, but bar associations in several jurisdictions have issued guidance cautioning attorneys to:

- Understand data retention and usage policies before using any AI tool with client data

- Obtain client consent before transmitting confidential information to AI platforms

- Avoid tools that train on user-submitted content without restriction

The governing principle is ABA Model Rule of Professional Conduct 1.6, which requires attorneys to make reasonable efforts to prevent inadvertent disclosure of client information. Using AI tools without understanding the vendor's data flow does not meet that standard. For a broader look at how these obligations apply to multimedia evidence, see VIDIZMO's legal redaction software for legal proceedings.

How to Use AI Compliantly: Redact First, Then Process

The most effective path to compliant AI use in your law firm is also the most direct: remove PHI and PII from documents before they reach any AI tool.

This redact-first workflow is straightforward in practice:

- Identify all PHI and PII in the document: patient names, dates of birth, Social Security numbers, diagnosis codes, provider names, insurance identifiers, and health plan numbers

- Redact those identifiers so they cannot be read or reconstructed from the submitted file

- Upload the de-identified document to the AI tool for summarization, drafting, or analysis

- Use the AI output for your legal work

- Retain the original with full PHI intact in your secure case management system

When redaction is complete, the file that reaches the AI contains no PHI. Even if the vendor's data handling is imperfect, there is nothing identifiable to expose. This approach is consistent with HIPAA's minimum necessary standard and substantially reduces the privilege risk of transmitting client information to third parties.
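As a rough illustration of the redact step, the sketch below replaces a few identifier types with labeled placeholders before any text leaves the firm's environment. The patterns, labels, and sample sentence are hypothetical and deliberately narrow. Pattern matching of this kind will miss names, addresses, and free-text identifiers, so treat it as a way to picture the workflow, not as a complete PHI detector.

```python
import re

# Hypothetical patterns for illustration only. Real PHI detection needs far
# broader coverage (names, addresses, provider identifiers) and human review.
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "DATE": re.compile(r"\b\d{1,2}/\d{1,2}/\d{4}\b"),
    "ICD10": re.compile(r"\b[A-TV-Z]\d{2}\.\d{1,4}\b"),
    "MEMBER_ID": re.compile(r"\bMember ID:\s*[A-Z0-9]+", re.IGNORECASE),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace each match with a labeled placeholder and count replacements."""
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[{label} REDACTED]", text)
        counts[label] = n
    return text, counts


# Made-up sample text, not taken from any real record.
sample = "DOB 04/12/1985, SSN 123-45-6789, dx S13.4, Member ID: XJ99812."
clean, counts = redact(sample)
print(clean)
print(counts)
```

The per-category counts are returned alongside the redacted text so that a reviewer can spot-check whether the numbers look plausible for the document before it is uploaded anywhere.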

To understand how selective redaction works in practice (removing only the identifiers you need to remove while keeping clinical or legal context intact), see Selective PII Redaction: Target Specific Data Types Without Over-Redacting.

What a Compliant AI Workflow Looks Like for a PI Firm

A personal injury firm receiving medical records from treating physicians, hospitals, and imaging centers will typically handle:

- Multi-hundred-page PDF medical records with embedded PHI

- Handwritten physician notes in scanned files

- Audio recordings of client statements or recorded calls

- Deposition transcripts that reference medical history

A compliant workflow for this environment follows four steps:

Step 1: Secure storage. Client files are stored in a secure case management or document management system, encrypted at rest.

Step 2: Automated redaction. Before any file is routed to an AI tool, it passes through a redaction layer. PHI categories are detected and removed automatically (patient names, dates, diagnosis codes, provider identifiers, and insurance numbers) across PDFs, scanned documents, and audio transcripts.

Step 3: AI processing. The de-identified version is submitted to the AI tool for summarization, chronology drafting, or damages analysis.

Step 4: Original preservation. The original, unredacted file remains secured in the firm's system. The redaction layer generates a clean copy without altering the source document, maintaining chain of custody while enabling AI-assisted work.
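The original-preservation step can be pictured with a short sketch. It assumes plain-text files and a redaction function supplied by the caller. The function name `make_redacted_copy`, the `for_ai` folder, and the sample record are all hypothetical. The idea is simply that the redacted copy is written to a separate location and the original's hash is checked before and after.

```python
import hashlib
import tempfile
from pathlib import Path
from typing import Callable


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file's bytes."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def make_redacted_copy(original: Path, out_dir: Path,
                       redact: Callable[[str], str]) -> Path:
    """Write a redacted copy to out_dir and confirm the original is unchanged."""
    before = sha256_of(original)
    out_dir.mkdir(parents=True, exist_ok=True)
    copy_path = out_dir / f"{original.stem}.redacted{original.suffix}"
    copy_path.write_text(redact(original.read_text(encoding="utf-8")),
                         encoding="utf-8")
    if sha256_of(original) != before:
        raise RuntimeError("original file changed during redaction")
    return copy_path


# Demo in a temporary directory with a placeholder redaction function.
with tempfile.TemporaryDirectory() as tmp:
    source = Path(tmp) / "records.txt"
    source.write_text("Patient: Jane Doe\nFinding: cervical strain\n",
                      encoding="utf-8")
    redacted_path = make_redacted_copy(
        source, Path(tmp) / "for_ai",
        lambda text: text.replace("Jane Doe", "[NAME REDACTED]"),
    )
    original_text = source.read_text(encoding="utf-8")
    redacted_text = redacted_path.read_text(encoding="utf-8")
    print(redacted_path.name)
    print(redacted_text)
```

Only the file in the separate output folder would ever be routed to an AI tool. Recording the hash of the original alongside the matter file is one simple way to show later that the source document was not altered.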

This workflow gives attorneys access to AI productivity gains without exposing client PHI to third-party platforms. For a deeper look at how this applies specifically to audio and recorded statements, see PHI Redaction for Telehealth Recordings: A HIPAA Compliance Guide.

How VIDIZMO Redactor Enables This Workflow

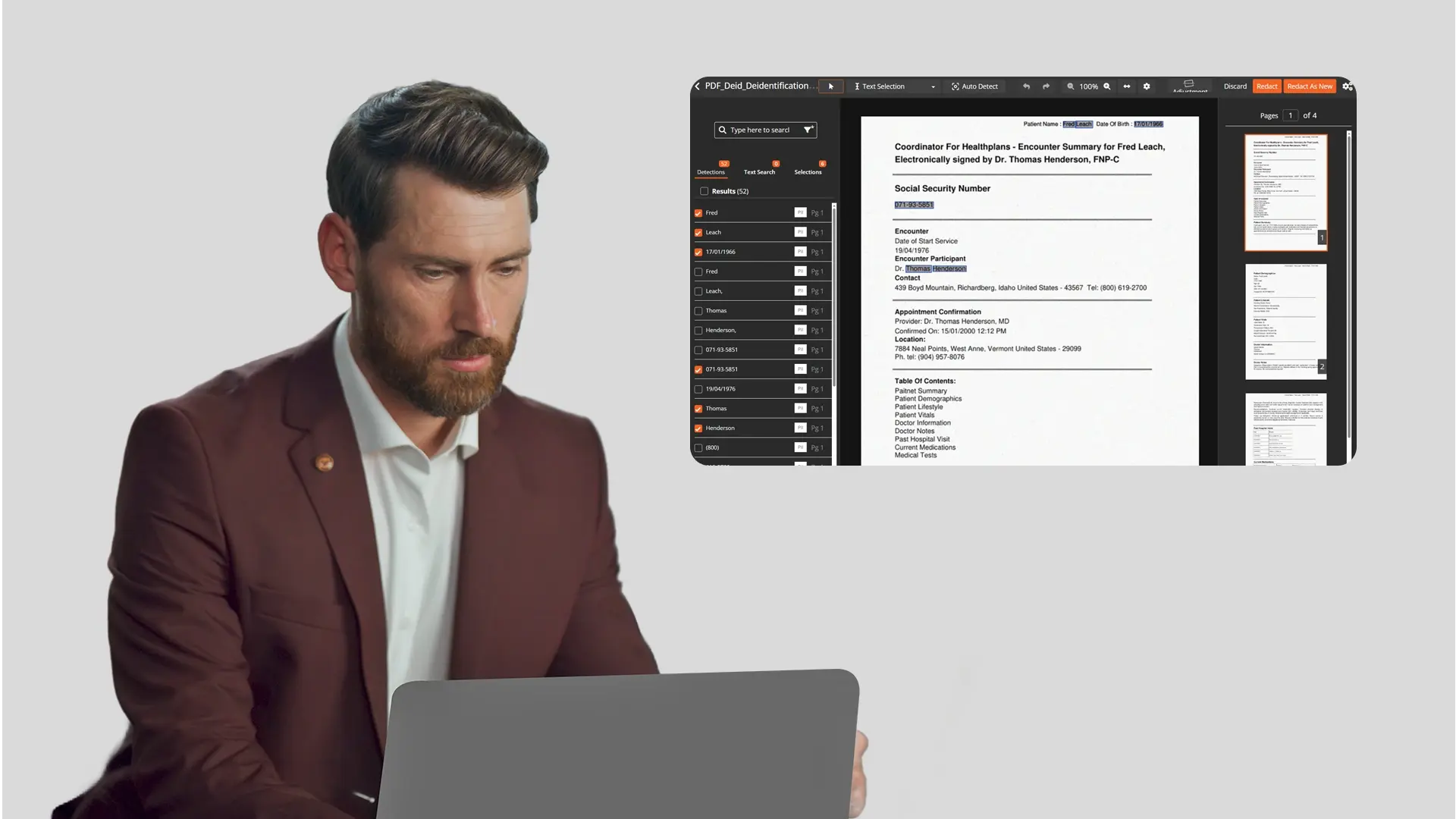

VIDIZMO Redactor provides the automated redaction layer that makes the redact-first workflow practical for small and mid-size law firms. Two capabilities are directly relevant to legal document workflows:

PHI and PII detection across document formats. Redactor processes PDFs, scanned documents, and Word files using optical character recognition (OCR), detecting and removing 40+ PHI and PII types: patient names, dates of birth, Social Security numbers, diagnosis codes, provider identifiers, and insurance information. Handwritten physician notes are handled through intelligent character recognition (ICR), an important capability for processing the scanned physician notes common in PI case files. See how AI-powered redaction works across all file types for the full format coverage.

Audit trail with original preservation. Every redaction action is logged: what was redacted, when, and by whom. Redacted copies are generated while originals remain intact in the system. This chain-of-custody model aligns with HIPAA's data minimization requirements and supports defensibility in the event of a bar inquiry or compliance review. For more on how this applies to legal e-discovery and evidence workflows, see how to redact legal documents in 2026.
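To make the audit trail idea concrete, here is a generic sketch of what a log entry capturing "what, when, and by whom" might contain. It is not VIDIZMO Redactor's API or log format. The `RedactionEvent` fields, the `record_event` helper, and the sample identifiers are assumptions chosen for illustration.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class RedactionEvent:
    document_id: str
    category: str   # what was redacted, recorded by type rather than by value
    count: int
    user: str       # by whom
    timestamp: str  # when, as a UTC ISO 8601 string


def record_event(log: list[str], document_id: str, category: str,
                 count: int, user: str) -> RedactionEvent:
    """Append one redaction event to the log as a JSON line."""
    event = RedactionEvent(document_id, category, count, user,
                           datetime.now(timezone.utc).isoformat())
    log.append(json.dumps(asdict(event), sort_keys=True))
    return event


# Hypothetical document and user identifiers.
audit_log: list[str] = []
record_event(audit_log, "matter-0001/records.pdf", "SSN", 2, "paralegal01")
record_event(audit_log, "matter-0001/records.pdf", "DATE_OF_BIRTH", 1,
             "paralegal01")
for line in audit_log:
    print(line)
```

Logging the category and count, rather than the redacted value itself, keeps PHI out of the audit log while still documenting how each file was handled.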

See how VIDIZMO Redactor protects client data before it reaches any AI tool. Request a demo to see the redact-first workflow in action.

Conclusion

AI tools offer real efficiency gains for law firms, but client data privacy must be addressed structurally, not as an afterthought. HIPAA compliance for law firms using AI is not satisfied by disabling chat history or using private browsing. It requires knowing where PHI goes, limiting what is transmitted, and ensuring any vendor that handles PHI operates under a proper Business Associate Agreement.

The redact-first workflow is the most reliable path to using AI compliantly. By removing PHI and PII before documents reach any AI platform, attorneys protect client confidentiality, reduce HIPAA exposure, and address the privilege risk of transmitting client information to third parties.

People Also Ask

Lawyers can use AI for medical records, but only when PHI is properly protected. Using AI tools to process identifiable medical records without a Business Associate Agreement and appropriate safeguards creates HIPAA liability. The compliant approach is to redact PHI from documents before submitting them to any AI tool.

Incognito mode does not make an AI tool safe for client data. It affects only your browser's local history and does not govern how the AI vendor processes, stores, or uses data submitted during a session. HIPAA-compliant AI for attorneys requires contractual and technical safeguards at the vendor level, not browser-side settings.

PHI redaction before AI processing means removing all protected health information (patient names, dates of birth, diagnosis codes, and insurance identifiers) from a document before it is submitted to any AI tool. This ensures no identifiable client data reaches the AI platform, satisfying HIPAA's data minimization principle and reducing attorney-client privilege risk.

Law firms that receive PHI from covered entities as part of legal representation are typically classified as business associates under HIPAA. This means they are subject to HIPAA's Security Rule requirements, including safeguarding PHI and establishing Business Associate Agreements with any vendor that processes PHI on their behalf.

About the Author

Ali Rind

Ali Rind is a Product Marketing Executive at VIDIZMO, where he focuses on digital evidence management, AI redaction, and enterprise video technology. He closely follows how law enforcement agencies, public safety organizations, and government bodies manage and act on video evidence, translating those insights into clear, practical content. Ali writes across Digital Evidence Management System, Redactor, and Intelligence Hub products, covering everything from compliance challenges to real-world deployment across federal, state, and commercial markets.
