How Healthcare AI Companies Share De-Identified Data Safely

by Ali Rind, Last updated: March 18, 2026

Healthcare AI companies routinely need to share clinical data with research partners, hospitals, and third-party organizations. Training AI models, validating algorithms, and conducting multi-site clinical studies all require access to patient data. But sharing that data without proper de-identification creates serious HIPAA exposure.

The challenge is not whether to share data. It is how to share it in a way that satisfies federal requirements while preserving enough clinical utility for the data to remain useful.

Why Healthcare AI Companies Face Unique Data Sharing Risks

Unlike traditional healthcare providers who generate and store patient data within a single system, healthcare AI companies often operate as intermediaries. They receive data from hospitals and clinics, process it through AI models, and share results or datasets with downstream partners.

Each handoff introduces compliance risk. Data that was properly protected within a hospital's EHR becomes a liability the moment it moves to an external system without adequate de-identification. And HIPAA enforcement does not distinguish between the organization that generated the data and the one that failed to protect it during transfer.

Common data sharing scenarios that require de-identification include:

- Sharing annotated imaging datasets with AI model training partners

- Providing clinical trial data to sponsors and regulatory bodies

- Exchanging patient outcome data with research collaborators

- Publishing de-identified datasets for peer review or validation studies

- Transferring clinical records between institutions for multi-site studies

HIPAA's Two De-Identification Standards

HIPAA provides two legal methods for de-identifying protected health information. Each has different requirements, costs, and practical implications.

Safe Harbor Method

The Safe Harbor method requires removal of 18 specific identifier categories from the data:

- Names

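- Geographic data smaller than a state (street address, city, county, ZIP code), except the first three digits of a ZIP code when the area they cover contains more than 20,000 people

- Dates (except year) directly related to an individual, and all ages over 89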

- Phone numbers

- Fax numbers

- Email addresses

- Social Security numbers

- Medical record numbers

- Health plan beneficiary numbers

- Account numbers

- Certificate/license numbers

- Vehicle identifiers and serial numbers

- Device identifiers and serial numbers

- Web URLs

- IP addresses

- Biometric identifiers

- Full-face photographs and comparable images

- Any other unique identifying number, characteristic, or code

The organization must also have no actual knowledge that the remaining information could identify an individual. Safe Harbor is the more commonly used method because it provides a concrete checklist rather than requiring statistical expertise.
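As a rough illustration of how part of the checklist can become redaction rules, here is a minimal sketch covering a few pattern-friendly categories. The patterns, labels, and the `redact` function are assumptions made for this example, not the rules of any particular product. They are US-centric and deliberately simple, and categories such as names or free-text dates generally need more than regular expressions.

```python
import re

# Hypothetical, simplified patterns for a handful of Safe Harbor categories.
# Real pipelines need broader coverage and validation against real data.
PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "URL": re.compile(r"\bhttps?://\S+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\(?\b\d{3}\)?[-. ]\d{3}[-. ]\d{4}\b"),
    "IP": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace each match with a [LABEL] placeholder and count hits per label."""
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        text, hits = pattern.subn(f"[{label}]", text)
        counts[label] = hits
    return text, counts


if __name__ == "__main__":
    note = "Contact jane.doe@example.org or 555-123-4567; SSN 123-45-6789"
    redacted, counts = redact(note)
    print(redacted)
    print(counts)
```

Returning per-category counts alongside the redacted text makes it easier to record what was removed, which matters later for audit documentation.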

Expert Determination Method

The Expert Determination method requires a qualified statistical or scientific expert to determine that the risk of identifying any individual from the data is very small. The expert must document their methods and results. This approach allows retention of more data elements (which can preserve clinical utility) but requires specialized expertise and is more expensive to implement.
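HIPAA does not prescribe a specific statistical technique or numeric threshold for Expert Determination. One measure an expert might look at, among others, is how small the smallest group of records sharing the same quasi-identifier values is (the idea behind k-anonymity). The sketch below assumes records are plain dictionaries; the field names and the `smallest_group_size` helper are hypothetical.

```python
from collections import Counter


def smallest_group_size(records: list[dict], quasi_identifiers: list[str]) -> int:
    """Return the size of the smallest group of records that share the same
    values for the given quasi-identifier fields (0 if there are no records)."""
    if not records:
        return 0
    groups = Counter(
        tuple(record[field] for field in quasi_identifiers) for record in records
    )
    return min(groups.values())


if __name__ == "__main__":
    # Hypothetical, already-generalized records.
    records = [
        {"age_band": "40-49", "zip3": "021", "sex": "F"},
        {"age_band": "40-49", "zip3": "021", "sex": "F"},
        {"age_band": "50-59", "zip3": "021", "sex": "M"},
    ]
    k = smallest_group_size(records, ["age_band", "zip3", "sex"])
    print(f"smallest group size: {k}")
```

A smallest group of size 1 means at least one record is unique on those fields. Whether that is acceptable is a judgment for the qualified expert, not something this check decides.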

Most healthcare AI companies rely on Safe Harbor because it is more operationally straightforward, especially when processing data at scale. The 18-category checklist translates directly into redaction rules that AI-powered tools can automate.

Data Sharing Agreements and BAAs

De-identification alone does not eliminate all compliance obligations. Organizations sharing clinical data also need proper legal frameworks in place.

Business Associate Agreements (BAAs)

Any organization that handles PHI on behalf of a covered entity must execute a BAA before receiving the data. This applies to healthcare AI companies receiving data from hospitals, CROs processing clinical trial documents, and cloud service providers hosting PHI.

A BAA establishes what PHI the business associate will access, how they will protect it, what happens in case of a breach, and the obligations for returning or destroying PHI when the engagement ends.

Data Use Agreements (DUAs)

For de-identified data (where HIPAA's PHI protections technically no longer apply), organizations typically still execute Data Use Agreements that specify permitted uses, prohibit re-identification attempts, and define data retention and destruction requirements.

Audit Trail Requirements

Regulatory bodies and research partners increasingly require documented proof that de-identification was performed correctly. This means maintaining records of what data was processed, which identifiers were detected and removed, who reviewed the redaction results, when the de-identification was completed, and what tools and methods were used.

Without these records, an organization cannot demonstrate compliance during an audit or investigation, even if the de-identification itself was thorough.
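The record-keeping items above could be captured in a small structured record. This is a minimal sketch, assuming a JSON-serializable format; the class name, field names, and the `new_audit_record` helper are illustrative assumptions rather than a mandated schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DeidAuditRecord:
    dataset_id: str            # what data was processed
    identifiers_removed: dict  # identifier category -> number removed
    reviewed_by: str           # who reviewed the redaction results
    completed_at: str          # when de-identification finished (UTC, ISO 8601)
    tool: str                  # which tool was used
    method: str                # e.g. "safe_harbor" or "expert_determination"


def new_audit_record(dataset_id, identifiers_removed, reviewed_by, tool,
                     method="safe_harbor") -> DeidAuditRecord:
    """Build a record stamped with the current UTC time."""
    return DeidAuditRecord(
        dataset_id=dataset_id,
        identifiers_removed=dict(identifiers_removed),
        reviewed_by=reviewed_by,
        completed_at=datetime.now(timezone.utc).isoformat(),
        tool=tool,
        method=method,
    )


def to_json(record: DeidAuditRecord) -> str:
    """Serialize with sorted keys so output is stable across runs."""
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    record = new_audit_record("ds-001", {"names": 12, "dates": 30},
                              "reviewer-a", "example-redactor 1.0")
    print(to_json(record))
```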

Building a Compliant Data Sharing Workflow

A practical workflow for sharing de-identified healthcare data includes these stages.

1. Data Classification

Before any processing, classify incoming data by sensitivity level. Determine which datasets contain PHI, what types of identifiers are present, and what the intended use of the shared data will be.
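For structured data, a first-pass classification can be as simple as mapping known column names to identifier categories. The sketch below assumes a hypothetical column-name mapping; real datasets need a mapping maintained for their own schemas, and unstructured fields need content inspection rather than name matching.

```python
# Hypothetical mapping from column names to Safe Harbor identifier categories.
FIELD_CATEGORIES = {
    "patient_name": "names",
    "dob": "dates",
    "admit_date": "dates",
    "zip": "geographic data",
    "mrn": "medical record numbers",
    "ssn": "social security numbers",
    "email": "email addresses",
}


def classify_dataset(columns: list[str]) -> dict:
    """Report which identifier categories the column names suggest."""
    found = sorted(
        {FIELD_CATEGORIES[c.lower()] for c in columns if c.lower() in FIELD_CATEGORIES}
    )
    return {"likely_contains_phi": bool(found), "identifier_types": found}


if __name__ == "__main__":
    print(classify_dataset(["MRN", "dob", "lab_value"]))
```

A negative result here only means no known identifier columns were found. It does not show that the dataset is free of PHI.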

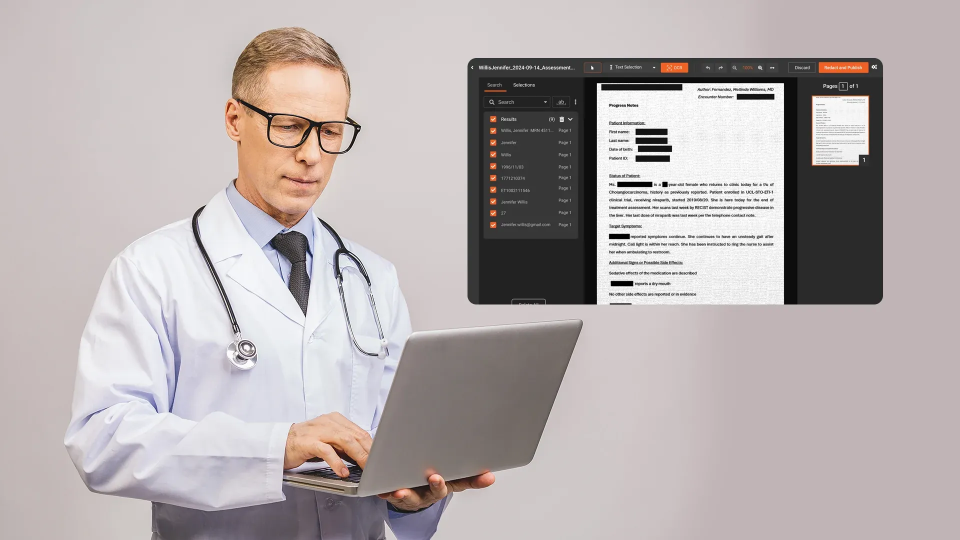

2. Automated PHI Detection and Redaction

Use AI-powered redaction to identify and remove PHI across documents, images, and clinical records. Automated detection should cover all 18 Safe Harbor identifier categories, with particular attention to unstructured text where names, dates, and provider identifiers appear in free-form narratives.

3. Human Review

AI detection is not perfect, particularly for edge cases like unusual name formats, embedded identifiers in clinical narratives, or context-dependent data. Human reviewers should verify AI detections, especially for high-risk datasets being shared externally.
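One way to combine automated detection with human review is to route detections by model confidence. This is a minimal sketch assuming the detector reports a confidence score between 0 and 1; the `Detection` type and the 0.95 threshold are hypothetical and would need tuning against measured error rates.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    text: str          # the detected span
    category: str      # identifier category, e.g. "names"
    confidence: float  # detector confidence in [0, 1]


def route(detections: list[Detection], auto_threshold: float = 0.95):
    """Split detections into auto-accepted ones and ones queued for human review."""
    auto_accepted, needs_review = [], []
    for detection in detections:
        if detection.confidence >= auto_threshold:
            auto_accepted.append(detection)
        else:
            needs_review.append(detection)
    return auto_accepted, needs_review


if __name__ == "__main__":
    detections = [
        Detection("John Smith", "names", 0.99),
        Detection("J. S.", "names", 0.62),
    ]
    accepted, queued = route(detections)
    print(len(accepted), "auto-accepted;", len(queued), "queued for review")
```

This only routes what the detector found. Identifiers the model missed entirely never reach the queue, which is why reviewers also need to sample documents directly for high-risk releases.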

4. Quality Assurance

Before releasing any dataset, run a final QA pass to confirm that no PHI remains. This is especially important for datasets that will be shared publicly or with multiple partners.

5. Audit Documentation

Generate and store audit records documenting every step of the de-identification process. These records should be immutable and accessible for regulatory review.
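One common way to make audit records tamper-evident is to chain them by hash, so that changing any earlier entry invalidates everything after it. The sketch below is an illustration under that assumption. The function names are hypothetical, and a hash chain does not replace write-once storage or access controls.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event: dict, prev_hash: str) -> str:
    payload = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(chain: list[dict], event: dict) -> dict:
    """Append an event whose hash covers both the event and the previous hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    entry = {"event": event, "prev_hash": prev_hash, "hash": _digest(event, prev_hash)}
    chain.append(entry)
    return entry


def verify_chain(chain: list[dict]) -> bool:
    """Recompute every hash; return False if any entry was altered or reordered."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    log: list[dict] = []
    append_entry(log, {"step": "redaction", "dataset_id": "ds-001"})
    append_entry(log, {"step": "human_review", "dataset_id": "ds-001"})
    print("chain valid:", verify_chain(log))
```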

6. Secure Transfer

Use encrypted transfer methods for sharing de-identified data. Even though the data is technically no longer PHI under HIPAA, best practices and most DUAs require encryption in transit.
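As a small sketch of what enforcing encryption in transit can look like on the sending side, the example below builds a TLS context that verifies certificates and refuses protocol versions older than TLS 1.2, and computes a checksum the recipient can use to confirm the file arrived intact. The helper names are hypothetical, and the TLS 1.2 floor is an assumption for this example, not a figure from HIPAA or from any specific DUA.

```python
import hashlib
import ssl


def strict_tls_context() -> ssl.SSLContext:
    """Client-side TLS context: certificate and hostname checks on, TLS 1.2+ only."""
    context = ssl.create_default_context()  # verifies certificates by default
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


def sha256_of(data: bytes) -> str:
    """Checksum to send alongside the dataset so the recipient can verify integrity."""
    return hashlib.sha256(data).hexdigest()


if __name__ == "__main__":
    context = strict_tls_context()
    print("minimum TLS version:", context.minimum_version.name)
    print("checksum:", sha256_of(b"example de-identified export"))
```

The context can be passed to standard-library clients that accept one, for example `urllib.request.urlopen(url, context=context)`.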

Building Trust Through Verifiable De-Identification

Compliant data sharing in healthcare AI is not just a legal requirement. It is a competitive advantage. Partners, sponsors, and regulatory bodies need confidence that shared data has been properly de-identified and that the process is documented and auditable.

VIDIZMO Redactor supports HIPAA-compliant redaction workflows with AI-powered detection of 40+ PII and PHI types, comprehensive audit trails documenting every redaction decision, and BAA/DPA support for healthcare deployments. The platform processes documents, images, and clinical records in a single workflow, with configurable confidence thresholds and human review capabilities for high-stakes data sharing.

Build confidence in your healthcare AI data pipelines. See how VIDIZMO Redactor ensures auditable, compliant de-identification.

Request a free trial today! No credit card required.

Key Takeaways

- HIPAA provides two de-identification methods: Safe Harbor (remove 18 identifier categories) and Expert Determination (statistical expert analysis). Most healthcare AI companies use Safe Harbor for operational simplicity.

- BAAs must be in place before sharing PHI with any business associate. Data Use Agreements should govern de-identified data sharing.

- Audit trails documenting every de-identification step are essential for regulatory compliance and partner trust.

- AI-powered redaction automates Safe Harbor compliance across documents and clinical records, but human review remains necessary for edge cases.

- Secure, encrypted transfer is a best practice even for de-identified data.

People Also Ask

De-identified data has had specific identifiers removed per HIPAA standards (Safe Harbor or Expert Determination). Anonymized data goes further, making re-identification statistically impossible. HIPAA uses the term "de-identified" rather than "anonymized."

Technically, fully de-identified data is no longer PHI under HIPAA, so a BAA is not legally required. However, most organizations still execute Data Use Agreements to govern how de-identified data is used and to prohibit re-identification.

De-identified data can be re-identified in some cases. Research has shown that combining de-identified datasets with publicly available data can enable re-identification. This is why Safe Harbor requires that the organization has no actual knowledge that remaining data could identify someone.

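Civil penalties for improperly handling PHI are tiered by level of culpability. The tiers set under the HITECH Act range from $100 to $50,000 per violation, with annual maximums up to $1.5 million per violation category; these amounts are adjusted annually for inflation, so current figures are higher. Criminal penalties can include imprisonment.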

If a healthcare AI company handles PHI on behalf of a covered entity (hospital, insurer, provider), it is a business associate under HIPAA and must comply with HIPAA requirements, including proper de-identification before sharing data.

The Safe Harbor method requires removal of 18 specific identifier categories (names, dates, SSNs, medical record numbers, etc.) from data and that the organization has no actual knowledge that remaining information could identify an individual.

About the Author

Ali Rind

Ali Rind is a Product Marketing Executive at VIDIZMO, where he focuses on digital evidence management, AI redaction, and enterprise video technology. He closely follows how law enforcement agencies, public safety organizations, and government bodies manage and act on video evidence, translating those insights into clear, practical content. Ali writes across Digital Evidence Management System, Redactor, and Intelligence Hub products, covering everything from compliance challenges to real-world deployment across federal, state, and commercial markets.
