AI for Criminal Legal Research: a Practitioner's Guide for 2026

by Nadeem Khan, Last updated: April 29, 2026

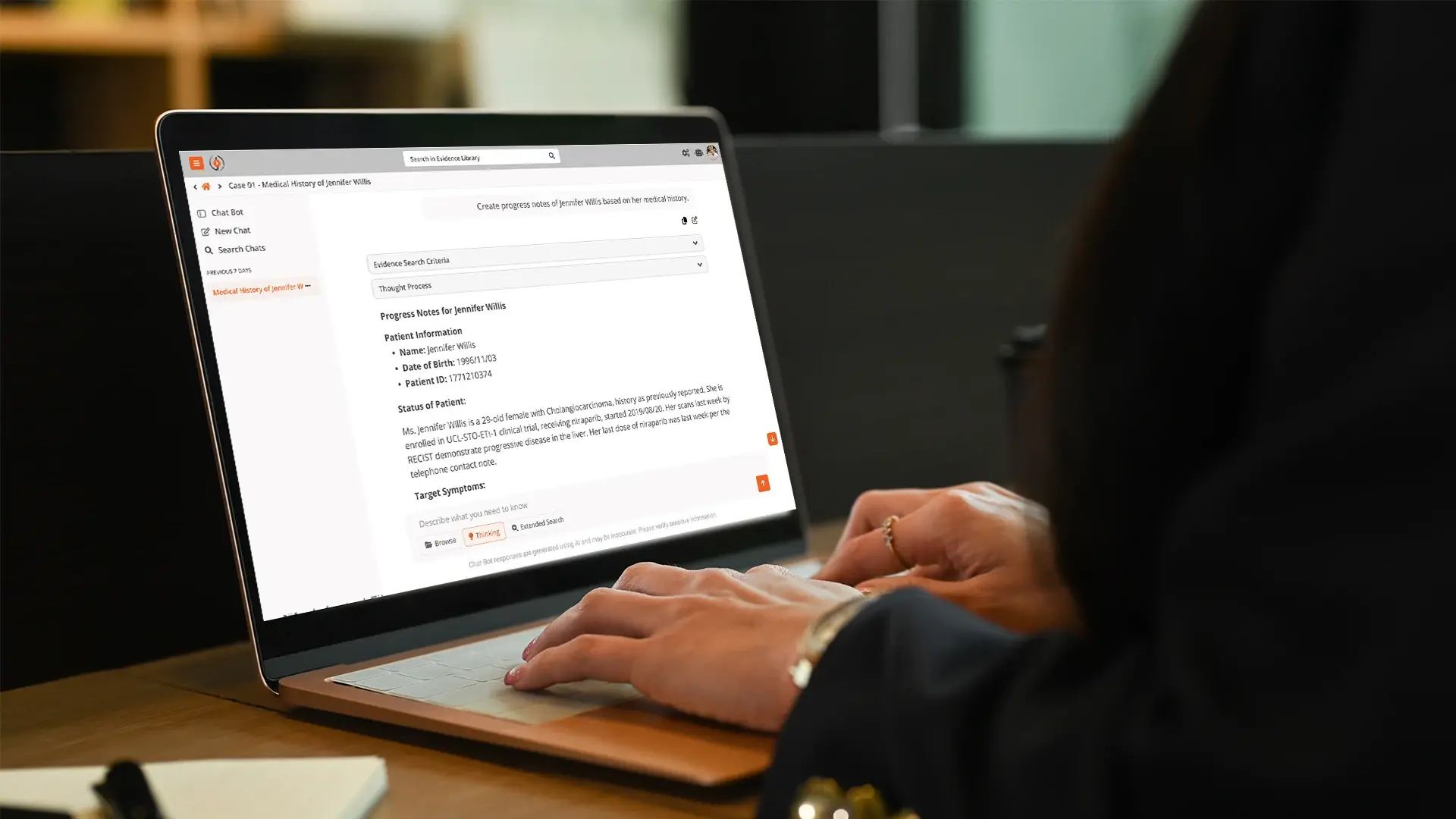

In 2025, the South Carolina Attorney General's office deployed an AI-assisted prosecution evidence search tool called CaseBot. Prosecutors there can ask in plain language, "find every reference to the firearm in these jail calls," and get back specific timestamps in specific recordings, with the audio cued to the exact moment they need. CaseBot is built on VIDIZMO Intelligence Hub and runs in a CJIS-aligned environment. It is one of the first publicly deployed AI tools in a state AG office doing real criminal-case work.

Most "AI for law" coverage in 2026 still treats criminal practice as a footnote to civil litigation. CaseBot is a useful counterexample, and the gap it points to is the through-line of this guide.

The category called "AI legal research" has actually split into two very different things. Westlaw Edge, Lexis+ AI, Casetext/CoCounsel, and Harvey were built for civil litigators drafting memos in BigLaw. They are not built for the line ADA inheriting a felony case with 300 GB of body-worn camera footage on Monday morning, or the public defender with a six-week trial date and a 12,000-page discovery production. Same vendor pitch, same RAG architecture, very different product reality. Telling which kind of "AI for law" a tool actually is matters more than its accuracy benchmark.

Key Takeaways

- AI criminal legal research now spans far beyond case-law search. It includes evidence triage, Brady and Giglio scanning, witness statement consistency, sentencing analytics, and drafting support.

- The 2024 Stanford RegLab "Hallucination-Free?" study found leading legal AI tools hallucinated on 17 to 33 percent of queries; general-purpose chat on 58 percent or more. That rate is disqualifying for primary criminal research.

- Civil-pedigree AI tools (Westlaw Edge, Lexis+ AI, Casetext, Harvey) underperform on criminal authority because their training weights civil precedent. Sentencing grids and jurisdiction-specific doctrine are where they fail most.

- Deployment model is the first filter for any office handling Criminal Justice Information. Public SaaS LLM endpoints generally violate the FBI CJIS Security Policy; on-premises and government-cloud deployments are the responsible path.

- Prosecutor and defender workflows differ enough that a one-size platform rarely serves both well. Evaluate against your actual case mix.

How AI gets criminal law wrong

Before talking about what AI does well, it is worth being specific about how it fails. Practitioner reports since 2024 have surfaced the same five failure modes:

- Fabricated case citations with real-sounding party names and plausible reporter numbers, the kind that has gotten attorneys sanctioned in dozens of reported decisions since Mata v. Avianca.

- Hallucinated statute subsections, especially around sentencing enhancements where the model splits or merges subsections that do not exist.

- Misstatements of mens rea requirements in jurisdiction-specific offenses; the model generalizes from the federal pattern when state law diverges.

- Confident summaries of cases whose holdings are the opposite of what the tool reports. This one is the most dangerous because it survives a quick read.

- Outdated law cited as current, particularly Fourth Amendment doctrine post-Carpenter v. United States.

The Stanford RegLab benchmark put numbers on this. Leading legal-specific AI tools hallucinated on 17 to 33 percent of queries. General-purpose chat hallucinated on 58 percent or more. For criminal work, where a hallucinated citation in a suppression motion is a sanctions risk and a client-welfare risk, those rates are disqualifying.

This is not an argument against AI in criminal practice. It is an argument for using the right kind of AI for the right task. Open-web generative chat is the wrong tool for authority research. Grounded, retrieval-based systems that cite their sources from a controlled corpus, and refuse to answer when the corpus does not support an answer, are a different animal with a different accuracy profile. Most of the sanctions cases involved the first kind. Most of the tools criminal practitioners are quietly using on real cases are the second.
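Here is a minimal sketch of the "cite or refuse" behavior. It assumes a toy corpus of passages, each tagged with its source, and uses a crude word-overlap score in place of a real retrieval index. The `Passage` type, the `grounded_answer` function, the stopword list, and the `min_overlap` threshold are all hypothetical illustrations, not any vendor's API.

```python
import re
from dataclasses import dataclass

# Hypothetical minimal stopword list so that function words do not count as support.
STOPWORDS = {"the", "a", "an", "of", "in", "on", "to", "was", "is", "what",
             "where", "when", "who", "did", "does", "and", "or"}


@dataclass(frozen=True)
class Passage:
    source: str  # where the text came from, e.g. a report and page number
    text: str


def _tokens(text: str) -> set[str]:
    """Lowercase content words; a crude stand-in for a real BM25 or embedding index."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def grounded_answer(question: str, corpus: list[Passage], min_overlap: float = 0.5) -> dict:
    """Return supporting passages with their sources, or an explicit refusal.

    min_overlap is the fraction of question terms a passage must contain to count as support.
    """
    q = _tokens(question)
    if not q:
        return {"answer": None, "citations": [], "reason": "empty question"}
    scored = []
    for p in corpus:
        overlap = len(q & _tokens(p.text)) / len(q)
        if overlap >= min_overlap:
            scored.append((overlap, p))
    if not scored:
        # Refuse rather than generate: nothing in the controlled corpus supports an answer.
        return {"answer": None, "citations": [], "reason": "not supported by the corpus"}
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return {
        "answer": [p.text for _, p in scored],
        "citations": [p.source for _, p in scored],
        "reason": "supported",
    }
```

Production systems use far better retrieval and typically have an LLM draft from the retrieved passages, but the design choice is the same. When retrieval finds no support, the system returns nothing instead of something plausible.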

Why criminal practice is different from civil

Four things separate AI for criminal practice from AI for civil. Each one breaks a tool that was not built with it in mind.

- The data is mostly unstructured. Civil litigation runs on documents and email. Criminal cases run on video, audio, images, cellphone extractions, social media exports, jail call recordings, ALPR hits, and forensic reports. A platform that only indexes text cannot read most of the file. Westlaw Edge, Lexis+ AI, and Casetext are document-and-text platforms.

- The authority is not just case law. Criminal practitioners work across statutes, sentencing guidelines, jury instructions, departmental policies, consent decrees, and state-specific evidence codes. A tool that retrieves cases but does not know your jurisdiction's sentencing grid has thin value for plea bargaining or sentencing memoranda.

- The chain of custody is a hard constraint. Evidence processed, summarized, or transcribed by an AI system still has to hold up in court. Provenance, versioning, and auditability tie back to standards like NIST SP 800-86. Civil tools were not designed around this and frequently fail it.

- The compliance environment is tighter. Criminal justice data is covered by the FBI CJIS Security Policy v5.9.5, which requires AES-256 at rest, TLS 1.2 in transit, fingerprint-based personnel screening, and strict audit logging. Sending criminal evidence to a commercial SaaS chat endpoint violates the policy in most agencies.

The platforms that serve criminal practice well are rarely the ones that serve general legal research well. The design tradeoffs are different.

Where AI actually earns its keep

The honest answer differs between prosecution and defense. Even within each side, the high-value tasks are narrower than the marketing suggests.

On the prosecution side, evidence triage pays back faster than authority research. A line ADA inheriting 300 GB of body-worn camera footage, cellphone extractions, and jail calls does not need a better way to find State v. Smith. They need a way to find the five minutes of video where the defendant makes an admission and the two jail calls where the codefendant describes the plan.

Sentencing analytics is the second high-value area. Pulling patterns from prior dispositions, across comparable charges and defendant histories, gives plea bargaining a data floor that used to rely on institutional memory. That memory walks out the door when senior prosecutors leave.

On the defense side, Brady and Giglio scanning is the most obvious win. When the discovery production is 40,000 pages and trial is in six weeks, a human-only review will miss things. A grounded AI system flags potential exculpatory material for attorney review, with source citations back into the file. That extends the defense team's reach without replacing attorney judgment.

Witness statement analysis is close behind. Cross-referencing a complaining witness's grand jury testimony against their preliminary hearing testimony and their initial interview is a classic human task. AI does it quickly and does not get bored doing it. The attorney still decides what to do with the inconsistencies; finding them stops being the bottleneck.

On both sides, transcription and translation of non-English jail calls, interview recordings, and intercepts is the unglamorous workstream everyone relies on most. It does not make headlines. It makes cases move.

Intelligence Hub publishes per-language word error rates (WER): 3.5 percent for Spanish, 4.5 percent for English, around 18 percent for Arabic, and higher for low-resource languages. A 96 percent accurate Spanish transcript is a workable starting point. A 70 percent accurate Kannada transcript is triage only, and certified human translation is still required for courtroom use. Knowing the WER per language up front is the whole point. Platforms that do not publish it are asking you to trust them blind.
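Because the transcription decision turns on WER, it helps to know how the number is computed. The standard definition counts the word substitutions (S), deletions (D), and insertions (I) needed to turn the machine transcript into a human-verified reference. That total is divided by the number of reference words (N): WER = (S + D + I) / N.

Below is a minimal Python sketch using word-level edit distance. It assumes simple whitespace tokenization and lowercasing; published benchmarks usually apply more careful text normalization.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")
    # dp[i][j] = minimum word edits to turn ref[:i] into hyp[:j]
    dp = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dp[i][0] = i  # delete every reference word
    for j in range(len(hyp) + 1):
        dp[0][j] = j  # insert every hypothesis word
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dp[i][j] = min(
                dp[i - 1][j] + 1,         # deletion
                dp[i][j - 1] + 1,         # insertion
                dp[i - 1][j - 1] + cost,  # substitution, or a match when cost is 0
            )
    return dp[len(ref)][len(hyp)] / len(ref)
```

For example, `word_error_rate("he said the gun is in the car", "he said a gun is in car")` finds one substitution and one deletion over eight reference words, a WER of 0.25.

WER can exceed 1.0 when a transcript contains many insertions, so "1 minus WER" is only a rough accuracy figure. Scoring a few human-verified transcripts from your own closed cases is a cheap check on whether published per-language figures hold on your audio.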

Ethical and procedural guardrails

The ethics conversation has matured since 2023. Most state bars have now issued opinions, and a rough consensus has emerged around six principles worth naming.

- Competence includes understanding the tool. ABA Model Rule 1.1 extends to the technology a lawyer uses.

- Confidentiality constraints are unchanged. Loading client confidential material into a public SaaS chat endpoint risks breaching Model Rule 1.6 and can put privilege at risk of waiver.

- Supervision applies to AI output. Treat AI research like a first-year associate's memo. Read it. Check the citations.

- Disclosure obligations vary by court. A growing number of federal districts and state court systems require disclosure of AI-assisted filings.

- Brady obligations extend to AI-assisted review. Brady v. Maryland, 373 U.S. 83 (1963), does not care how you found what you found.

- Record retention applies. Prompt logs, query histories, and AI-generated drafts are case-file material in most jurisdictions.

A common mistake we've seen is treating AI output as draft work product that doesn't need to be preserved. That assumption fails the moment there's a post-conviction challenge or a discovery dispute about what the office knew and when.
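One way to make prompt logs defensible as case-file material is to make them tamper-evident. The sketch below uses a hypothetical `PromptLog` class that chains each entry to the SHA-256 hash of the entry before it, so editing an earlier entry breaks verification. It assumes an in-memory log for illustration. A real office would write to durable, access-controlled storage under its own retention policy. The field names and example values are invented.

```python
import hashlib
import json
from datetime import datetime, timezone


class PromptLog:
    """Hypothetical append-only log; each entry commits to the previous entry's hash."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, user: str, case_id: str, prompt: str, output: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "case_id": case_id,
            "prompt": prompt,
            "output": output,
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash a canonical JSON encoding of every field except the hash itself.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def verify(self) -> bool:
        """Return False if any entry was edited, or if an earlier entry was removed or reordered."""
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True
```

The chain detects edits to earlier entries and gaps in the middle. It does not detect silent truncation of the newest entries. Recording the latest hash somewhere outside the log, such as the case management record, closes that gap.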

How to evaluate a platform

Six criteria separate platforms that survive real cases from the ones that demo well:

- Source grounding. Does every answer cite specific pages, timestamps, or documents? Ungrounded output is unusable in court.

- Modality coverage. Can it process video, audio, images, and documents in one pipeline? Criminal evidence is rarely text-only.

- Deployment model. Can it run on-premises, in government cloud, or air-gapped? CJIS rules often prohibit commercial SaaS LLMs.

- Model flexibility. Can it use multiple LLMs, including self-hosted models? Single-model lock-in ages badly.

- Audit trail. Does it provide confidence scores, review checkpoints, and a full audit log? Attorney supervision duties do not disappear.

- Data isolation. Is your data kept out of the vendor's commercial model training? If not, that is a discovery and confidentiality risk.

The deployment question is the cheapest thing to get wrong and the most expensive to fix. If your office handles CJIS-regulated data, the first question is not which model has the highest accuracy. It is whether the platform can run in an environment that can legally touch the data.
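The six criteria work best as a screen with the deployment check first. That way a platform that cannot hold the data is ruled out before anyone looks at accuracy. Below is a minimal Python sketch of that ordering.

It rests on several simplifying assumptions:
- The `Platform` fields, deployment labels, and failure messages are hypothetical simplifications of the criteria above.
- The labels reflect the article's on-premises, government cloud, and air-gapped options.
- A deployment label alone does not establish CJIS compliance.
- A real evaluation would rest on pilot results, not yes/no flags.

```python
from dataclasses import dataclass

# Hypothetical labels for deployment options that can hold CJIS-regulated data.
CJIS_ELIGIBLE = {"on_premises", "government_cloud", "air_gapped"}


@dataclass
class Platform:
    name: str
    deployments: set[str]  # e.g. {"saas"} or {"on_premises", "government_cloud"}
    modalities: set[str]   # e.g. {"video", "audio", "image", "document"}
    source_grounding: bool
    multi_model: bool
    audit_trail: bool
    data_isolated: bool


def screen(p: Platform, handles_cjis: bool, needed_modalities: set[str]) -> list[str]:
    """Return the reasons a platform fails the screen; an empty list means it passes."""
    if handles_cjis and not (p.deployments & CJIS_ELIGIBLE):
        # Deployment is checked first: if the platform cannot legally touch
        # the data, none of the other criteria matter.
        return ["no deployment option that can hold CJIS-regulated data"]
    failures = []
    if not p.source_grounding:
        failures.append("answers do not cite pages, timestamps, or documents")
    missing = needed_modalities - p.modalities
    if missing:
        failures.append("cannot process: " + ", ".join(sorted(missing)))
    if not p.multi_model:
        failures.append("locked to a single model")
    if not p.audit_trail:
        failures.append("no audit trail or review checkpoints")
    if not p.data_isolated:
        failures.append("data may feed the vendor's model training")
    return failures
```

Returning reasons instead of a single score keeps the output readable for a procurement memo. It also avoids averaging a disqualifying failure away against strengths elsewhere.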

A tool's demo case is its best case. Pilot on one or two of your own closed cases with messy real evidence (body-worn camera footage, a Cellebrite extraction, a few jail calls, scanned exhibits) and time the full workflow. Two real cases tell you more than ten demos.

Where Intelligence Hub fits, and where it doesn't

Intelligence Hub is a multi-modal AI processing platform built for organizations applying computer vision, NLP, generative AI, and agentic RAG to unstructured data. For criminal practice, the case file spans every modality the platform handles: video, audio, images, and documents.

It fits well for:

- Prosecution offices with multi-modal evidence loads and active prosecutor review workflows.

- Defense and AG offices that need Brady scanning, court exhibit preparation, post-conviction integrity review, case timeline generation, and criminal-case document drafting on one platform.

- Jurisdictions that need CJIS-aligned Azure Government or on-premises deployment.

- Offices already using VIDIZMO DEMS, where Intelligence Hub reads directly from the case evidence store.

It does not fit as well for pure authority research, for very small offices wanting a 30-day SaaS pilot with no infrastructure conversation, or for offices whose evidence is overwhelmingly documents and email.

A serious AI tool for criminal practice has to match your evidence types, your deployment constraints, and your review workflow, in that order. Picking on accuracy benchmarks before those three is how procurement teams end up replatforming inside eighteen months.

CaseBot in practice

CaseBot is the cleanest reference deployment to point at because it is public and runs real prosecution work. The South Carolina AG's office put CaseBot into production in 2025 to help prosecutors search across multi-modal evidence on active cases.

What CaseBot does, narrowly:

- Plain-language search across modalities. A prosecutor queries "every reference to the firearm in these jail calls" and gets exact-timestamp matches, not summaries.

- Source-grounded answers only. If the corpus does not support an answer, CaseBot says so instead of hallucinating.

- CJIS-aligned deployment. Runs in Azure Government Cloud; evidence never leaves a CJIS-cleared boundary.
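Returning timestamps instead of summaries depends on indexing transcripts at the segment level. The minimal Python sketch below rests on two assumptions. First, transcripts have already been split into timestamped segments. Second, the plain-language query has already been reduced to a list of terms, including slang such as "strap". A real system would interpret the query itself; that step is assumed away here. The `Segment` type and `find_mentions` function are hypothetical and do not describe how CaseBot or Intelligence Hub is implemented.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    recording: str  # e.g. a jail call file name
    start_s: float  # segment start, in seconds from the beginning of the recording
    text: str       # transcript text for this segment


def find_mentions(segments: list[Segment], terms: set[str]) -> list[tuple[str, str]]:
    """Return (recording, mm:ss) for every segment that mentions any of the terms."""
    lowered = {t.lower() for t in terms}
    hits = []
    for seg in segments:
        words = set(re.findall(r"[a-z']+", seg.text.lower()))
        if words & lowered:
            minutes, seconds = divmod(int(seg.start_s), 60)
            hits.append((seg.recording, f"{minutes:02d}:{seconds:02d}"))
    return hits
```

Each hit points back to a recording and an offset, so the attorney can cue the audio and hear the exact moment. That is the same grounding property the bullets above describe.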

CaseBot is not a Brady scanner, sentencing analytics tool, or brief drafter. It is a case-evidence search tool, narrowly scoped, and the narrow scope is part of why it works. Tools that try to do everything in criminal practice tend to do nothing well.

The general lesson: scope the use case tightly, ground every output in the case file, deploy where the data can legally live, and let attorneys verify before relying on output. Those four moves separate AI deployments that survive contact with real cases from the ones that get quietly turned off after the first sanctions hearing in another district.

People Also Ask

Can general-purpose AI chat tools be used for criminal legal research?

No, not as a primary research tool. Public SaaS chat creates confidentiality and CJIS compliance risks with criminal case data, and hallucination rates are too high for primary use.

Can AI help with Brady review?

It can surface potentially exculpatory material faster than human-only review, but it is a triage tool, not a substitute for attorney judgment. The Brady obligation does not shift to the vendor.

What deployment model should an office handling criminal case data use?

For CJIS-regulated data: on-premises, government cloud (Azure Government, AWS GovCloud), or hybrid. Commercial SaaS LLM endpoints usually violate agency rules.

How many languages can AI transcription handle?

Coverage varies. Intelligence Hub supports 82 languages with published per-language WER. Always ask for per-language error rates before planning workflows around transcription.

Do courts require disclosure of AI-assisted filings?

Requirements vary by court. A growing number of federal districts and state courts require disclosure. Check local rules and your state bar's current guidance before filing.

Getting started

The platforms that matter for criminal practice over the next 18 to 24 months will be the ones that ground every answer in a controlled corpus, handle every evidence modality, deploy where the data lives, and give attorneys the audit trail they need to sign the work product. The gap between general legal AI and criminal-practice AI will widen, not narrow.

For offices starting now, the right sequence is to pick one high-volume, high-pain use case (evidence triage, transcription, or Brady scanning), pilot on real closed-case data, measure accuracy against human-reviewed ground truth, and scale only what clears the bar. Avoid platform-wide rollouts. Avoid vendors who cannot show their hallucination rate on the task you actually need to do.
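For a triage task such as Brady scanning, measuring accuracy against human-reviewed ground truth usually comes down to two numbers. Precision is the share of the AI's flags that a reviewer confirms. Recall is the share of reviewer-confirmed items that the AI also found. The minimal Python sketch below assumes each flagged item carries a hypothetical document or page ID, and that the human review of the closed case is treated as the ground truth.

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    precision: float  # share of AI flags that reviewers confirmed
    recall: float     # share of reviewer-confirmed items the AI also flagged
    missed: list[str] # confirmed items the AI did not flag; read these first


def score_pilot(ai_flagged: set[str], human_confirmed: set[str]) -> PilotResult:
    """Compare AI-flagged item IDs against a human-reviewed ground-truth set."""
    true_pos = ai_flagged & human_confirmed
    precision = len(true_pos) / len(ai_flagged) if ai_flagged else 0.0
    recall = len(true_pos) / len(human_confirmed) if human_confirmed else 0.0
    return PilotResult(precision, recall, sorted(human_confirmed - ai_flagged))
```

Suppose the AI flags four documents and reviewers confirm three items, two of which the AI caught. Precision is then 0.5 and recall about 0.67. For Brady work, recall arguably decides whether a tool clears the bar: a missed exculpatory item costs far more than a false flag an attorney dismisses quickly.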

If you would like to see how Intelligence Hub handles one of your real workflows, talk to an AI specialist about a pilot on a closed case from your docket. We will be straight about whether it is a fit for your office or whether a different tool is closer to your workflow.

About the Author

Nadeem Khan

Nadeem Khan is the CEO and co-founder of VIDIZMO, where he has led the company's growth from a video management startup into an AI-powered platform trusted by federal law enforcement, defense agencies, and Fortune 500 enterprises. He spearheaded the development of VIDIZMO's Digital Evidence Management System, now used by leading public safety agencies across North America. With over 25 years in enterprise software architecture and cloud infrastructure, Nadeem brings hands-on technical depth to every product decision. Before taking the CEO role, he served as CTO and Chief Architect at VIDIZMO and spent 17 years as Principal Consultant at Softech Worldwide, a Microsoft Gold Partner. He holds a BS in Electronics from NED University of Engineering and Technology.
